The European AI Act

First binding and harmonized legal framework in the world dedicated to artificial intelligence

European regulation is directly applicable in all Member States

Risk-based approach used

Objective: to reconcile innovation, safety, fundamental rights, and trust

Key Dates

April 2021

Proposal of the AI Regulation by the European Commission

December 2023

Political agreement reached on the text (formal adoption followed in 2024)

2024–2025

Transition phase: preparation, guidelines, authorities

August 2, 2026

Full and mandatory application in the EU

Concerned Actors

The regulation also applies to non-EU actors if the AI produces effects within the Union

Providers

Developers, publishers, and designers of AI systems

Deployers / Users

Companies and administrations using AI

Importers & Distributors

Responsible for placing systems on the market

Control Authorities

National and European supervision authorities

Obligations — Providers/Suppliers

Classify the system according to risk level

Design in accordance with the requirements of the regulation

Provide technical documentation and traceability

Conduct conformity assessments before placing systems on the market

Ensure post-market surveillance

Obligations — Deployers/Users

Use AI in accordance with its intended purpose

Put in place human supervision

Manage risks and incidents related to systems

Inform users when required

ISO/IEC 42001 – AI management system

The ISO/IEC 42001 standard provides a structured framework for AI system governance, facilitating risk management, documentation, and continuous improvement

It represents a relevant methodological starting point, but does not in itself constitute proof of legal compliance with the AI regulation

Obligations — Importers & Distributors

Cooperate with the authorities during inspections

Inform users when required

Regarding individuals

The AI Regulation does not apply to natural persons using an AI system for strictly personal, non-professional purposes

An individual may be classified as a deployer when their use exceeds the private sphere

What will change from August 2, 2026

Mapping

Requirement to identify and classify AI systems by risk level

Integrated compliance

Integrate AI in accordance with GDPR, cybersecurity, and data governance requirements

Responsibility

Increased responsibilities for user companies

Transparency

Enhanced information and documentation requirements for high-risk AI (mainly established by the provider before placing on the market or putting into service)

Sanctions

Financial penalties of up to €35 million or 7% of global annual turnover, whichever is higher, for the most serious infringements
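As a rough illustration of the penalty ceiling, the sketch below computes the maximum possible fine for a given turnover. It assumes the ceiling is the higher of the two amounts, which is the usual reading for the most serious infringements. The function name and parameters are hypothetical, and the result is an upper bound, not a prediction of an actual fine.

```python
def max_fine_eur(global_annual_turnover_eur: float,
                 fixed_cap_eur: float = 35_000_000,
                 turnover_percent: float = 7) -> float:
    """Return the assumed upper bound of the fine, in euros.

    Assumption: the ceiling is the higher of the fixed amount and
    the given percentage of global annual turnover.
    """
    if global_annual_turnover_eur < 0:
        raise ValueError("turnover cannot be negative")
    turnover_based = global_annual_turnover_eur * turnover_percent / 100
    return max(fixed_cap_eur, turnover_based)


# A company with EUR 100 million turnover: 7% is EUR 7 million,
# so the fixed EUR 35 million ceiling applies.
small = max_fine_eur(100_000_000)

# A company with EUR 1 billion turnover: 7% is EUR 70 million,
# which exceeds the fixed ceiling.
large = max_fine_eur(1_000_000_000)
```

Under this assumption, the turnover-based ceiling only overtakes the fixed amount once global annual turnover exceeds €500 million.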

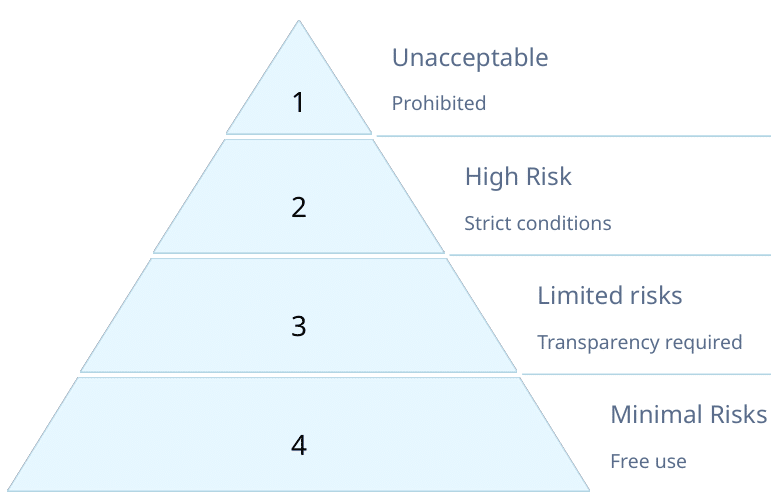

Risk-based approach — Four levels

The diagram shows the risk hierarchy and the main implications for each level.

Details by risk level

Unacceptable

• Behavioral manipulation (AI that analyzes your emotions and adapts messages to push you to buy something without you realizing it)

• Exploitation of vulnerabilities

• Mass biometric recognition (permanent surveillance of crowds without any specific legal reason)

→ Prohibited

High risk

• Recruitment (AI that sorts resumes or ranks candidates for a position)

• Healthcare (AI that assists in making medical diagnoses)

• Education (a system that ranks students according to their performance)

• Justice (AI that helps estimate the risk of recidivism)

• Essential services

→ Permitted under strict conditions (data governance, human supervision, documentation)

Limited risk

• Chatbots

• Content generators

→ Primary obligation of transparency (informing the user)

Minimal risk

• Office AI, games

• Filters

• Simple recommendations

→ Free use, without specific obligations
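For an internal AI inventory, the four levels above can be sketched as a simple lookup. This is only an illustrative starting point: the names `RiskLevel`, `EXAMPLES`, and `consequence_for` are hypothetical, the use-case labels are taken from the examples listed above, and a real classification requires case-by-case legal analysis.

```python
from enum import Enum
from typing import Optional


class RiskLevel(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Main consequence attached to each level, as summarized in this section.
CONSEQUENCE = {
    RiskLevel.UNACCEPTABLE: "prohibited",
    RiskLevel.HIGH: "permitted under strict conditions",
    RiskLevel.LIMITED: "transparency obligation (inform the user)",
    RiskLevel.MINIMAL: "free use, without specific obligations",
}

# Hypothetical inventory labels, based on the examples in this section.
EXAMPLES = {
    "behavioral manipulation": RiskLevel.UNACCEPTABLE,
    "mass biometric recognition": RiskLevel.UNACCEPTABLE,
    "resume screening": RiskLevel.HIGH,
    "medical diagnosis support": RiskLevel.HIGH,
    "recidivism risk estimation": RiskLevel.HIGH,
    "chatbot": RiskLevel.LIMITED,
    "content generator": RiskLevel.LIMITED,
    "game": RiskLevel.MINIMAL,
    "filter": RiskLevel.MINIMAL,
}


def lookup(use_case: str) -> Optional[RiskLevel]:
    """Return the example risk level, or None if the use case is not listed."""
    return EXAMPLES.get(use_case.strip().lower())


def consequence_for(use_case: str) -> str:
    """Return the main consequence for a listed use case."""
    level = lookup(use_case)
    if level is None:
        # Anything outside the example list is deliberately left open.
        return "unclassified: needs case-by-case legal analysis"
    return CONSEQUENCE[level]
```

Returning an explicit "unclassified" result, rather than defaulting to minimal risk, keeps unknown systems visible until someone has actually assessed them.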

Impact on businesses & next steps

• A new layer of compliance to anticipate now

• Convergence of GDPR, AI, cybersecurity, and risk governance

• A key role for lawyers, DPOs, and compliance officers

• An increase in AI audits and controls