Lessons from the Anthropic Dispute

The recent clash between Anthropic and the U.S. Department of War is an important early signal for everyone working in data privacy and AI governance. While public debate focuses on AI in military use, the deeper story for compliance leaders is that a “supply chain risk” designation was applied to a domestic AI vendor in a dispute over contractual guardrails.

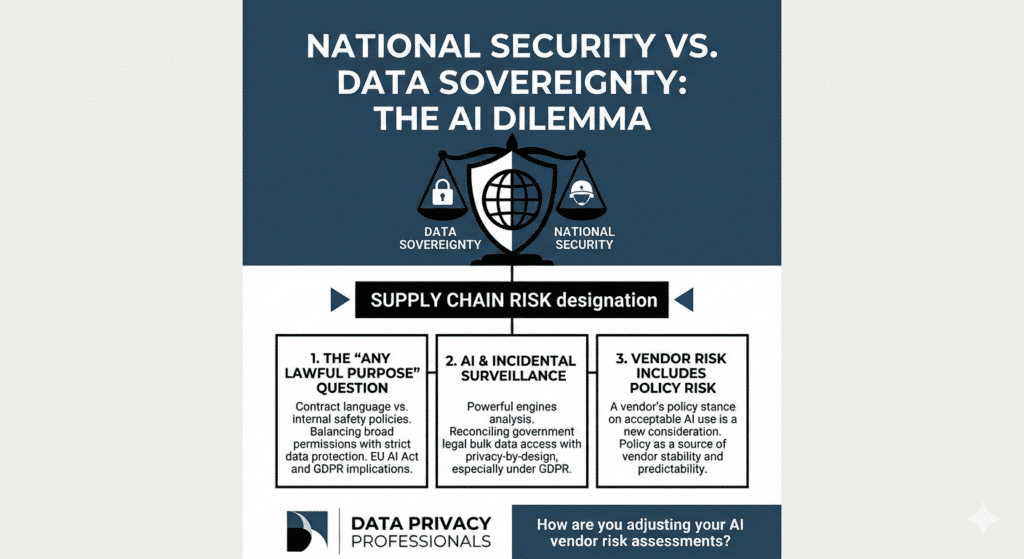

For the first time, a U.S.-based AI company has reportedly been treated as a supply chain concern not because of a known security breach, but because it resisted broad “all lawful purposes” language and sought to limit certain surveillance and autonomous use cases in its government contracts. This is a meaningful shift in how ethics, safety, and national security intersect in procurement.

Why this matters for Privacy & Compliance

The “Any Lawful Purpose” Question

Governments are increasingly seeking AI contracts that permit “any lawful purpose,” which may go beyond a provider’s own acceptable-use or safety policies. For those managing GDPR or upcoming EU AI Act obligations, this raises a practical question: how do we reconcile a government’s definition of “lawful” processing with stricter data protection and fundamental rights standards, especially in cross-border or joint-controller scenarios?

AI and Incidental Surveillance

In an environment where governments can legally acquire certain categories of bulk data from data brokers, powerful AI systems can become the analytical engine that links and interprets that data at scale. If an AI provider has limited ability to constrain such use, it becomes significantly harder for DPOs and compliance teams to ensure privacy‑by‑design and proportionality in line with GDPR principles.

Vendor Risk Now Includes Policy Risk

The Anthropic case illustrates that a vendor’s policy stance on acceptable uses of AI can itself become a source of uncertainty. If a provider can be restricted or deprioritised for maintaining stricter guardrails, organisations need to consider not only technical and security criteria, but also the stability and predictability of a vendor’s regulatory and policy exposure over time.

At Data Privacy Professionals, we believe that well‑designed privacy and safety guardrails should not be treated as a purely negotiable line item. As AI capabilities develop faster than many legal frameworks, our contracts and governance playbooks are often the most immediate protections we have. It is worth investing the effort to ensure those protections are robust, realistic, and aligned with both legal requirements and organisational values.

How are you adjusting your AI vendor risk assessments in light of developments like Anthropic’s dispute?